- Pre-push hooks: Unit tests for changed files only. Fast, targeted, catches regressions immediately.

Tools: Husky for git hooks, lint-staged for running linters on staged files only, tsc --noEmit for TypeScript type checking.
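As a rough illustration of how these tools might fit together, the sketch below models a function-style lint-staged configuration, where each glob maps to a function that receives the staged file paths and returns the commands to run. The glob patterns, the `eslint --fix` and `prettier --write` commands, and the `StagedTask` type are assumptions chosen for illustration, not a prescribed setup. Type checking runs once for the whole project because tsc ignores tsconfig.json when file paths are passed on the command line.

```typescript
// Hypothetical type for a function-style task: staged paths in, commands out.
type StagedTask = (files: string[]) => string[];

// Lint only the staged TypeScript files, but type-check the whole project:
// tsc is invoked without file arguments so that tsconfig.json is respected.
const tsCommands: StagedTask = (files) => [
  `eslint --fix ${files.join(" ")}`,
  "tsc --noEmit",
];

// Formatting-only pass for non-code files (assumed tool: prettier).
const docCommands: StagedTask = (files) => [
  `prettier --write ${files.join(" ")}`,
];

// Glob-to-task mapping; the patterns are illustrative.
const stagedConfig: Record<string, StagedTask> = {
  "*.{ts,tsx}": tsCommands,
  "*.{json,md}": docCommands,
};
```

In a real project an equivalent object would be the default export of the lint-staged config file, and a Husky pre-commit hook would invoke lint-staged.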

What this prevents: reviewers commenting on issues that machines should catch. Every PR that reaches code review already passes type checks, linting, and unit tests. In teams we've worked with, pre-commit hooks reduce code review comments by 25-30%.

3. Code review with quality focus

Code review is a testing activity. Most teams treat it as a correctness check. It should also be a quality check.

Add to your code review checklist:

- Are there tests for the new code? Do they test behavior, not implementation?

- Are error paths handled? What happens when the API call fails, the database is down, or the input is invalid?

- Does this code change existing behavior? If so, are existing tests updated?

- Is the code testable? (Code that's hard to test is usually poorly designed.)

- For AI-generated code: does it follow the project's architecture patterns?
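To make the checklist questions about error paths and testability concrete, here is a minimal sketch. The `fetchPrice` function, the `PriceClient` dependency, and the `Result` type are hypothetical names invented for this example. The dependency is injected so that each failure mode (invalid input, unusable response, failed call) can be exercised in a unit test without a network.

```typescript
// Hypothetical result type: callers must handle both branches explicitly.
type Result<T> = { ok: true; value: T } | { ok: false; error: string };

// Hypothetical dependency, injected so tests can simulate failures.
interface PriceClient {
  getPrice(sku: string): Promise<number>;
}

async function fetchPrice(
  client: PriceClient,
  sku: string
): Promise<Result<number>> {
  // Error path 1: invalid input is rejected before any remote call.
  if (sku.trim() === "") {
    return { ok: false, error: "invalid-sku" };
  }
  try {
    const price = await client.getPrice(sku);
    // Error path 2: the call succeeded but returned unusable data.
    if (!Number.isFinite(price) || price < 0) {
      return { ok: false, error: "invalid-price" };
    }
    return { ok: true, value: price };
  } catch {
    // Error path 3: the API call itself failed (timeout, outage, and so on).
    return { ok: false, error: "upstream-unavailable" };
  }
}
```

A test for this function asserts on the returned `Result` for each scenario, which checks behavior rather than implementation: the internals can change freely as long as the three error outcomes stay the same.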

Why it works in practice: We've analyzed defect data from teams before and after implementing quality-focused code reviews. The defect escape rate drops 30-40% within two months. The initial overhead of longer reviews pays for itself within the first sprint through fewer bugs reaching later stages.

4. Automated testing in CI/CD

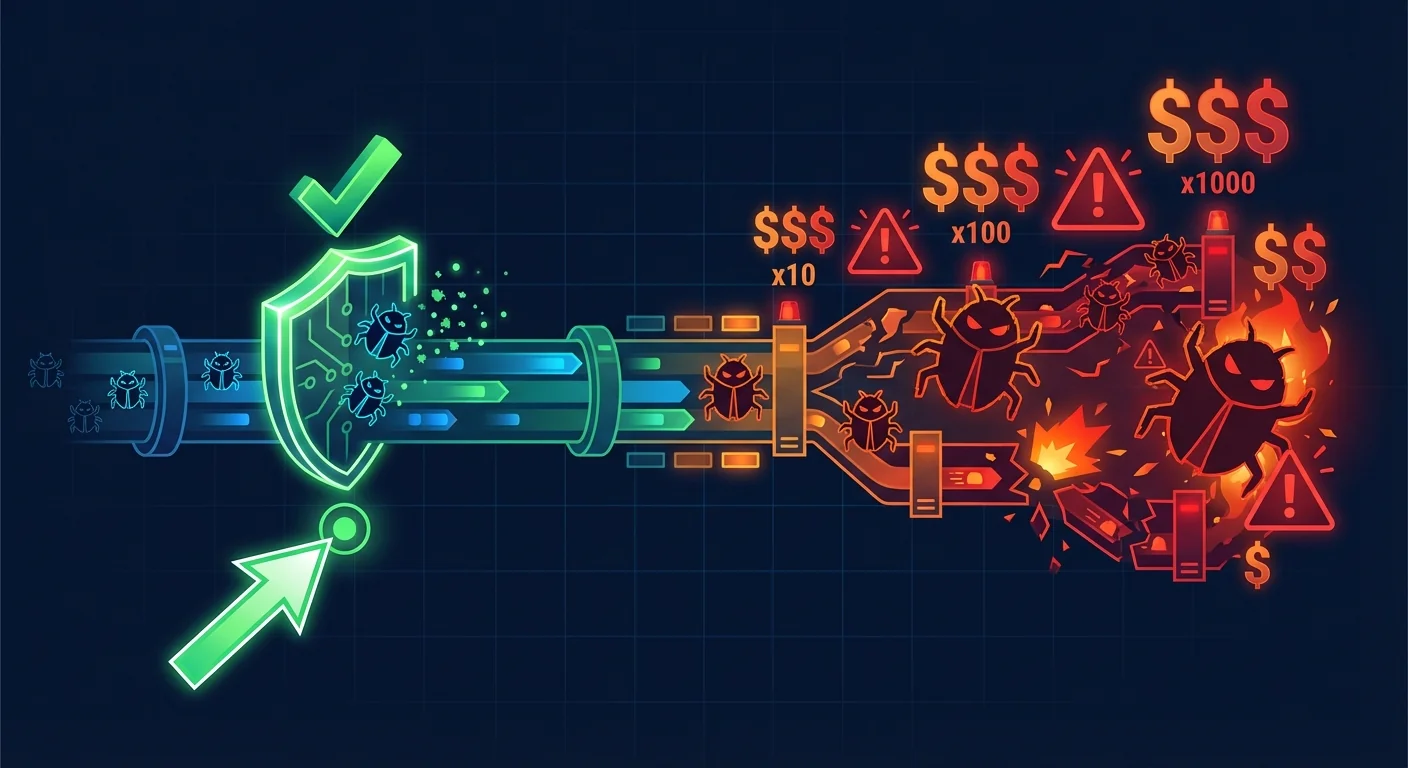

The pipeline should enforce quality automatically at every stage. No human should need to remember to run tests — the system refuses to ship untested code.

The four CI/CD gates:

| Gate | When | What runs | SLA | If it fails |
|---|---|---|---|---|
| PR check | Every pull request | Unit tests + integration tests + linting | Under 5 minutes | PR cannot be merged |
| Pre-deploy | After merge to main | Full E2E suite | Under 15 minutes | Deploy is blocked |
| Post-deploy | After production deploy | Smoke tests | Under 2 minutes | Auto-rollback |
| Scheduled | Nightly | Performance tests + security scans | Under 1 hour | Alert on findings |

Key principle: Tests that block deploys must be fast and reliable. If your E2E suite takes 45 minutes, developers will bypass the gate. If 10% of tests are flaky, the team ignores failures. Invest in speed and stability.
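The four gates can be expressed as data plus a small decision function. This is a sketch only: the `Gate` type, the `evaluateGate` function, and the choice to report a slow pass as its own outcome are assumptions made for illustration. The time budgets and failure actions are the ones listed for the four gates.

```typescript
type FailureAction = "block-merge" | "block-deploy" | "auto-rollback" | "alert";

interface Gate {
  name: string;
  budgetSeconds: number; // time budget from the SLA column
  onFailure: FailureAction;
}

// The four gates, with budgets and failure actions as listed in the article.
const gates: Gate[] = [
  { name: "PR check", budgetSeconds: 5 * 60, onFailure: "block-merge" },
  { name: "Pre-deploy", budgetSeconds: 15 * 60, onFailure: "block-deploy" },
  { name: "Post-deploy", budgetSeconds: 2 * 60, onFailure: "auto-rollback" },
  { name: "Scheduled", budgetSeconds: 60 * 60, onFailure: "alert" },
];

type Outcome =
  | { status: "pass" }
  | { status: "pass-over-budget"; overBySeconds: number }
  | { status: "fail"; action: FailureAction };

// A failed run triggers the gate's action. A slow pass is reported separately
// so the team can fix pipeline speed before developers start bypassing the gate.
function evaluateGate(
  gate: Gate,
  testsPassed: boolean,
  durationSeconds: number
): Outcome {
  if (!testsPassed) {
    return { status: "fail", action: gate.onFailure };
  }
  if (durationSeconds > gate.budgetSeconds) {
    return {
      status: "pass-over-budget",
      overBySeconds: durationSeconds - gate.budgetSeconds,
    };
  }
  return { status: "pass" };
}
```

With these definitions, a 45-minute E2E run against the pre-deploy gate passes but is reported as 30 minutes over budget, which is the early warning the key principle asks for.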

5. Testing in development environments

Give developers the ability to run integration tests locally or in ephemeral environments. If testing requires deploying to a shared staging server, developers won't test until the story is "done."

Setup: Ephemeral environments per PR (Vercel preview deploys, Firebase preview channels, or Docker Compose for backend services). Every PR gets its own isolated environment with its own database state.

Impact: Developers catch integration issues during development instead of during code review or QA testing. At Globalbit, we've seen this reduce the "works on my machine" problem by 80% on projects where ephemeral environments were implemented.
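One way to keep per-PR environments isolated is to derive every resource name from the PR number. The sketch below is hypothetical: `previewEnvFor`, the `PreviewEnv` shape, and the `pr-` and `app_pr_` naming schemes are invented for illustration and do not call any Vercel, Firebase, or Docker API.

```typescript
interface PreviewEnv {
  name: string; // e.g. used as a preview channel or Compose project name
  databaseName: string; // separate database so PRs never share state
}

// Derive deterministic names from the PR number so that two PRs can never
// collide and a re-run of the same PR reuses the same environment.
function previewEnvFor(prNumber: number): PreviewEnv {
  if (!Number.isInteger(prNumber) || prNumber <= 0) {
    throw new Error(`invalid PR number: ${prNumber}`);
  }
  return {
    name: `pr-${prNumber}`,
    databaseName: `app_pr_${prNumber}`,
  };
}
```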

Measuring shift-left effectiveness

These are the metrics that prove shift-left is working:

| Metric | Before shift-left (typical) | After shift-left (90 days) | Target |
|---|---|---|---|
| Defect escape rate | 30-50% | 10-15% | Below 10% |
| Bug fix cost (median) | ₪5,000 | ₪1,200 | Below ₪1,000 |
| PR cycle time | 3-5 days | 1-2 days | Under 2 days |
| Production incidents/month | 8-12 | 2-4 | Below 3 |
| Deploy frequency | Weekly | Daily | Daily+ |

These numbers counter two common objections: shift-left does not reduce deployment frequency (it increases it), and the investment in earlier checks pays for itself within 60-90 days through reduced incident costs.
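The defect escape rate can be computed directly from defect counts. The sketch below assumes a common definition (defects found in production divided by all defects found in the same period); the article does not spell out its formula, and the function name and sample counts are hypothetical.

```typescript
// Assumed definition: escaped / (escaped + caught before release).
function defectEscapeRate(
  foundInProduction: number,
  foundBeforeRelease: number
): number {
  if (foundInProduction < 0 || foundBeforeRelease < 0) {
    throw new Error("defect counts must be non-negative");
  }
  const total = foundInProduction + foundBeforeRelease;
  if (total === 0) return 0; // no defects recorded, so nothing escaped
  return foundInProduction / total;
}

// Hypothetical counts: 6 of 50 defects reached production.
const escapeRate = defectEscapeRate(6, 44);
console.log(`${(escapeRate * 100).toFixed(0)}%`); // prints "12%"
```

Tracking the two counts per sprint rather than only the ratio makes it easier to see whether an improvement comes from catching more defects early or from fewer defects overall.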

The organizational resistance (and how to handle it)

"We don't have time to write tests"

The team that doesn't write tests spends 30-40% of sprint capacity on bug fixes and incident response. That's the time they're looking for. Shifting even 10% of sprint capacity from reactive bug fixing to proactive testing produces a net positive within two sprints.

"Our QA will catch it"

QA at the end of the pipeline catches 60-70% of bugs. That sounds good until you calculate the cost of the 30-40% that escape and the cost of finding bugs after the developer has moved to the next story. Shift-left doesn't replace QA. It reduces the volume and severity of what QA needs to catch.

"We'll add tests later"

No one ever adds tests later. We've audited 200+ codebases. The ones that said "we'll add tests after launch" have the lowest test coverage and the highest defect rates 12 months later. Test now or accept permanent technical debt.

FAQ

What's the minimum shift-left setup for a startup?

Pre-commit hooks (linting + type checking) + 5 smoke tests on every deploy + quality criteria in sprint planning. This takes 1-2 days to implement and catches 40% of bugs before they leave the developer's machine.
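A smoke test layer for deploys can be very small. The sketch below assumes each check is an async function that resolves to true when healthy; `SmokeCheck` and `runSmokeChecks` are hypothetical names, and the checks themselves (an HTTP probe, a login round-trip, and so on) are left to the caller.

```typescript
interface SmokeCheck {
  name: string;
  run: () => Promise<boolean>; // resolves true when the check passes
}

// Run every check and collect failures instead of stopping at the first,
// so a single report shows everything that is broken after a deploy.
async function runSmokeChecks(checks: SmokeCheck[]): Promise<string[]> {
  const failed: string[] = [];
  for (const check of checks) {
    try {
      if (!(await check.run())) {
        failed.push(check.name);
      }
    } catch {
      // A thrown error (timeout, refused connection) counts as a failure.
      failed.push(check.name);
    }
  }
  return failed;
}
```

An empty result means the deploy is healthy. A non-empty result is the signal to block or roll back, and the list of names tells the team where to look first.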

How do we shift left without slowing down developers?

Make the fast tests fast. Pre-commit hooks should take under 10 seconds. PR checks under 5 minutes. If your CI pipeline takes 30 minutes, fix the pipeline speed before adding more tests. Developers don't resist testing — they resist waiting.

Does shift-left work with AI-generated code?

It's even more important. AI-generated code ships faster, which means bugs reach production faster if you don't have early quality gates. Add AI-specific security scanning to your PR checks and mutation testing to your nightly suite. We specialize in testing pipelines for AI-era development.

What if we have zero tests right now?

Start with the smoke test layer from this article plus pre-commit hooks. Don't try to go from zero to comprehensive in one sprint. The 90-day buildout path in our QA team building guide gives you a week-by-week plan. Or talk to us — we've built QA from zero many times.