TL;DR: Nearly half of all new code is now AI-generated through tools like Copilot and Cursor. Studies show this code has 1.7× more bugs and 1.57× more security vulnerabilities than human-written code. Production incidents rose 23.5% in 2025 while shipping speed jumped 20%. The problem isn't AI. The problem is that your QA process was designed for a world where humans wrote every line. Here's what needs to change.

The speed trap nobody warned you about

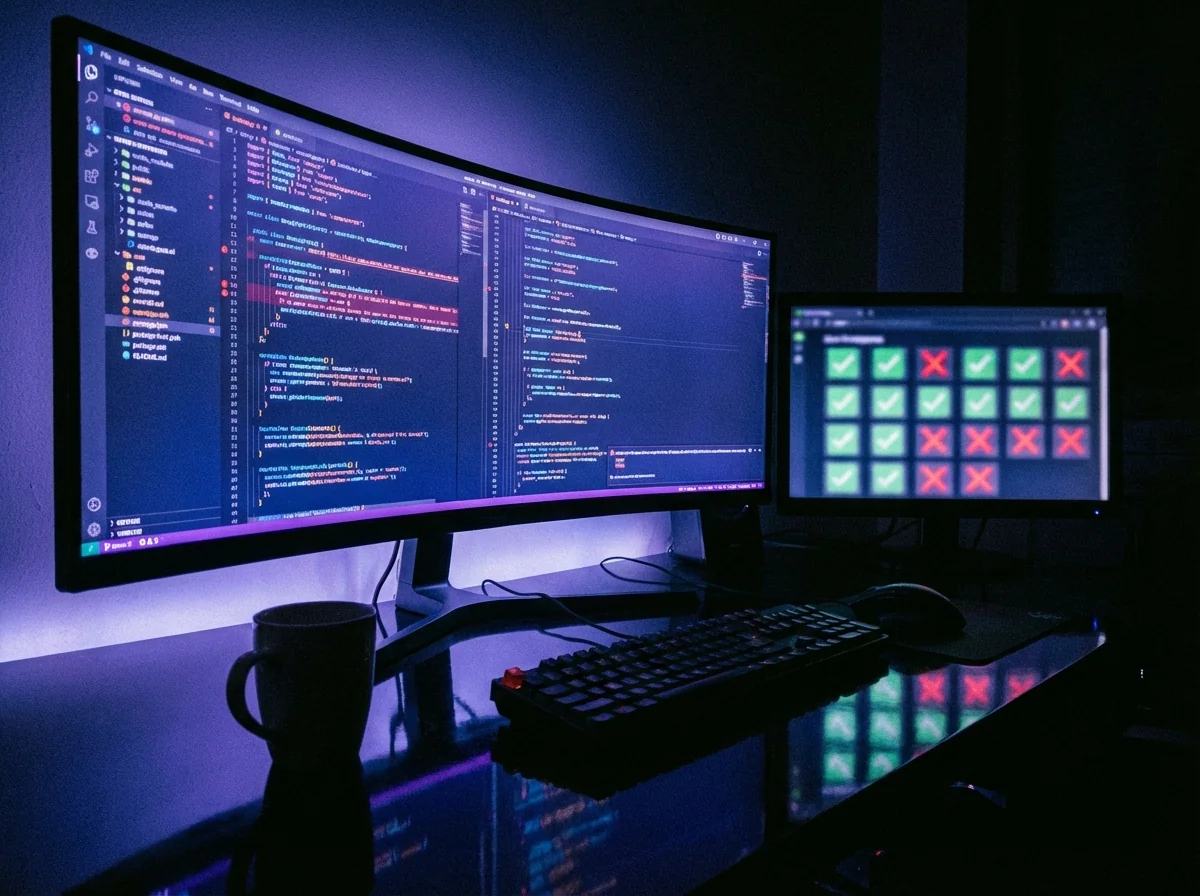

Something shifted in 2025. Teams started shipping 20% more code, and production incidents climbed 23.5%. The correlation isn't accidental.

The cause: vibe coding. Developers accept AI-generated code without detailed line-by-line review, iterate through prompts, and ship. Collins Dictionary named "vibe coding" its Word of the Year for 2025. As of early 2026, 92% of US developers use AI coding tools daily, and 46% of new code is AI-generated.

The output feels productive. The velocity dashboards look great. But the code itself tells a different story.

An analysis of code shipped in late 2025 found that AI-generated code contains 1.7× more issues overall than human-written code, spanning bugs, logic errors, and security vulnerabilities. Not edge cases. Real production issues: hardcoded passwords, SQL injection, improper authentication. One study found over 2,000 vulnerabilities in just 5,600 publicly available vibe-coded applications.
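To make the SQL injection case concrete, here is a minimal sketch using Python's built-in sqlite3 module. The table, the user names, and the function names are hypothetical, invented for illustration. The unsafe version is the kind of code that passes linting and looks fine in a quick review; the safe version differs by one line.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database with two hypothetical users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "viewer")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: user input is spliced directly into the SQL text.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized: the driver passes the input as data, never as SQL.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()


# A classic injection payload: closes the string literal and adds a
# condition that is always true.
PAYLOAD = "nobody' OR '1'='1"

if __name__ == "__main__":
    conn = make_db()
    print("unsafe:", find_user_unsafe(conn, PAYLOAD))  # leaks every row
    print("safe:  ", find_user_safe(conn, PAYLOAD))    # matches nothing
```

Both functions return the same result for ordinary input such as "alice", which is why a happy-path test cannot tell them apart. Only a test that sends hostile input exposes the difference.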

We see this at Globalbit every time a new client comes to us after an AI-accelerated launch. The codebase looks clean. It passes linting. The architecture seems reasonable. But under the surface: inconsistent error handling across modules, security patterns that look correct individually but contradict each other, and business logic that works for the happy path but breaks at every edge case.

Why your existing tests miss AI-generated bugs

The bugs are different now

AI-generated code doesn't fail the way human-written code fails. Human bugs tend to be typos, missing null checks, and off-by-one errors. Your test suite was probably built to catch these patterns.

AI bugs are more subtle. The code is syntactically correct, passes linting, and looks reasonable on review. But it hides logic errors that only surface under specific conditions. Reported figures back this up: 29.1% of Copilot-generated Python code contains potential security weaknesses, and AI-authored code has 75% more misconfigurations than human-written equivalents.

Test coverage doesn't mean what it used to

A team can have 80% coverage and still miss the most dangerous AI-generated bugs. Why? Because coverage measures which lines execute during tests, not whether the tests verify the right behavior. AI tools tend to generate plausible-looking functions that handle the common case and silently break on edge cases that a human developer would have anticipated.
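A small sketch shows the gap between executing a line and verifying it. The function and both tests are hypothetical, written for illustration. The first test runs every line of the function, so a coverage tool would report 100% for it, yet it never checks the one property that matters.

```python
def split_bill(total_cents: int, people: int) -> list[int]:
    """Split a bill evenly. Hypothetical happy-path-only implementation."""
    share = total_cents // people  # integer division drops the remainder
    return [share] * people


def decorative_test() -> None:
    # Executes every line of split_bill, but only checks the result's shape.
    result = split_bill(1000, 4)
    assert len(result) == 4


def behavioral_test() -> None:
    # Checks the invariant the business cares about: no money goes missing.
    result = split_bill(1000, 3)
    assert sum(result) == 1000, f"lost {1000 - sum(result)} cent(s)"


if __name__ == "__main__":
    decorative_test()  # passes, and coverage looks perfect
    try:
        behavioral_test()
    except AssertionError as exc:
        print("behavioral test failed:", exc)
```

The decorative test passes and the behavioral test fails on the same code. An amount that divides evenly hides the bug; an amount that does not divide evenly reveals it.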

At Globalbit, when we audit codebases with significant AI-generated content, we typically find that 30 to 40% of existing tests are effectively decorative. They pass, they add to coverage numbers, and they catch nothing meaningful.
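One way to check whether a test is decorative is mutation testing: deliberately break the code and see whether the suite notices. The sketch below does this by hand with a single hypothetical function and a single hand-written mutant. It illustrates the idea under those assumptions and does not describe any particular audit process; dedicated mutation testing tools generate and run mutants automatically.

```python
from typing import Callable

Predicate = Callable[[int], bool]


def is_adult(age: int) -> bool:
    """Hypothetical function under test."""
    return age >= 18


def is_adult_mutant(age: int) -> bool:
    """Hand-written mutant: '>=' changed to '>'."""
    return age > 18


def weak_suite(fn: Predicate) -> None:
    # Only probes values far from the boundary.
    assert fn(30) is True
    assert fn(5) is False


def strong_suite(fn: Predicate) -> None:
    weak_suite(fn)
    assert fn(18) is True  # the boundary case the weak suite skips


def kills(suite: Callable[[Predicate], None], mutant: Predicate) -> bool:
    """Return True if the suite fails when run against the mutant."""
    try:
        suite(mutant)
    except AssertionError:
        return True
    return False


if __name__ == "__main__":
    print("weak suite kills mutant:  ", kills(weak_suite, is_adult_mutant))
    print("strong suite kills mutant:", kills(strong_suite, is_adult_mutant))
```

Both suites pass against the original function. Only the strong suite fails against the mutant, which means the weak suite would keep passing if the boundary bug shipped.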

Your QA team wasn't trained for this

Most QA engineers learned to test human-written code. They know to check boundary conditions, look for race conditions, and verify error handling. That's still necessary. But AI-generated code introduces a new category: code that's internally consistent but architecturally wrong. The function works. It just shouldn't exist, or it duplicates logic that lives elsewhere, or it implements a pattern that contradicts how the rest of the system handles the same scenario.
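Duplicated logic is the easiest part of this category to detect mechanically. As a rough sketch, the standard-library ast module can fingerprint each function by its arguments and body while ignoring its name, then group the matches. The sample source and its function names are hypothetical. This only catches structurally identical copies with the same parameter and variable names, so treat it as a starting point for review and not a complete detector.

```python
import ast
from collections import defaultdict


def find_duplicate_functions(source: str) -> list[list[str]]:
    """Group functions whose arguments and bodies are structurally identical."""
    tree = ast.parse(source)
    groups: dict[str, list[str]] = defaultdict(list)
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            # ast.dump omits line numbers by default, so two copies of the
            # same code at different positions yield the same fingerprint.
            fingerprint = ast.dump(node.args) + "".join(
                ast.dump(stmt) for stmt in node.body
            )
            groups[fingerprint].append(node.name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


# Hypothetical module in which two helpers implement the same logic.
SAMPLE = '''
def normalize_email(value):
    return value.strip().lower()

def clean_email(value):
    return value.strip().lower()

def normalize_name(value):
    return value.strip().title()
'''

if __name__ == "__main__":
    for names in find_duplicate_functions(SAMPLE):
        print("same logic under different names:", ", ".join(names))
```

Running this on the sample flags clean_email and normalize_email as one group. The harder cases in the paragraph above, such as a pattern that contradicts how the rest of the system handles a scenario, still need a reviewer who knows the architecture.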